
In AI age, spread of disinformation poses big threat to democracies

As major democracies, including India, the UK, and the US, go to the polls over the next two years, large AI models that use deep learning may worsen disinformation issues, especially in nations with lower digital literacy

On May 10, a day after former Pakistan prime minister Imran Khan was arrested in a corruption case, a picture of him in custody went viral. Indian news outlets seized on this image, using it to depict a frustrated Khan. The only problem was that the image was not authentic – it had been generated using an AI tool called ‘Midjourney.’

The episode highlights the dangers of AI and how creative tools can be exploited to spread disinformation. The conversation around AI as a futuristic technology with unlimited potential often obscures the many dangers it presents.

A growing number of AI specialists warn about AI’s role in escalating disinformation – the intentional sharing of false data to mislead or harm, according to fact-checking organization Poynter. These specialists argue that AI has made disinformation easier to distribute. The primary victim is politics and, consequently, democracy.

“The larger picture of disinformation and AI presents a significant threat to the stability of our society. Disinformation, fueled by AI-based programs, has the potential to cause far-reaching damage,” Jameela Sahiba, senior program manager at The Dialogue, a Delhi-based think tank focusing on emerging tech, says.

AI’s ability to generate human-like content, which Jameela says is evident in language models, raises concerns about the authenticity of information and about the potential for AI-generated content to spread disinformation further.

A key risk of AI-driven disinformation is its impact on political culture, particularly during elections. Major democracies, including India, the UK, and the US, are scheduled to go to the polls over the next two years.

On May 28, Indian wrestlers, who were protesting against alleged sexual harassment by Wrestling Federation of India (WFI) president Brij Bhushan Sharan Singh, were stopped en route to the newly inaugurated Parliament. Subsequently, two different versions of a selfie taken by one of the protesting wrestlers began to circulate on Twitter.

One of the pictures showed the wrestlers smiling in a police van after being detained. The picture went viral with the claim that the wrestlers were not serious about the protests. The source of the image is unknown, but critics of the wrestlers shared it widely to undermine their protest against Singh, a member of parliament from the ruling Bharatiya Janata Party (BJP). Singh, who has denied the allegations, has been charged by Delhi Police with assault, sexual harassment, and criminal intimidation, and the matter is now pending before a court.

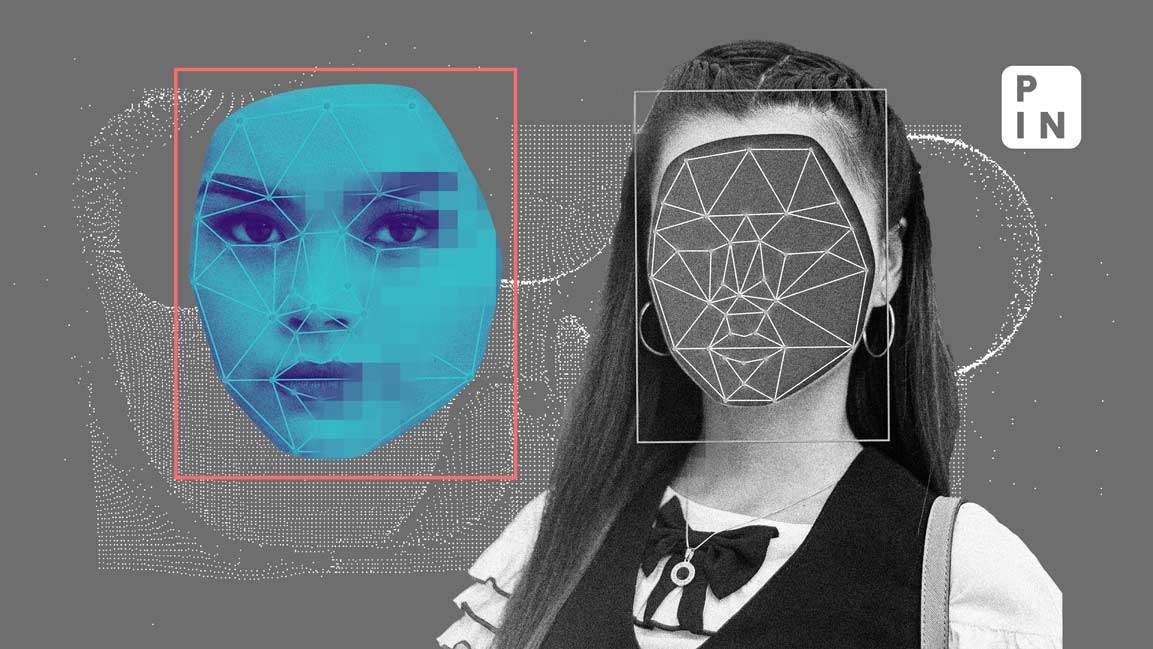

Fact-checkers eventually debunked the manipulated image. Uzair Rizvi, a fact-checker with Agence France-Presse (AFP), explains how the image was edited using FaceApp.

“I browsed the internet for more images but didn’t find any. Then I looked at the image closely – Vinesh has a dimple on her right cheek in the image, but she doesn’t have one in real life. I ran the pic through a facial expression alteration app and the results were similar,” Rizvi says.

When it comes to the impact of AI on the spread of disinformation, both fact-checkers and tech experts have repeatedly expressed concerns.

Large AI models that use deep learning and massive data sets could worsen disinformation issues in countries with lower digital literacy, like India.

AI-driven disinformation

The image of Khan in custody was made with Midjourney, a generative AI program whose maker describes itself as “an independent research lab exploring new mediums of thought and expanding the human species’ capacity for imagination.”

There are similar tools that can churn out artificial images. Former US president Donald Trump fell victim to disinformation this March when AI-generated photos depicting his arrest went viral. While Trump has been indicted on various charges, he had not been arrested as the viral images suggested.

While AI-generated imagery of this kind is a new phenomenon that has gathered steam with increasingly sophisticated AI tools, ‘deepfake’ software, which was used to target the wrestlers, has been around for some time.

“Deepfakes” are media manipulated by AI, replacing one person’s appearance or voice with another’s to create a convincing illusion.

Such technology isn’t just used for mischief; it can have serious financial consequences. A scammer in northern China cheated someone out of 4.3 million yuan (about $0.6 million) by using deepfake technology to impersonate the victim’s close friend, leading to a large money transfer into the scammer’s account.

A similar incident was reported in Arizona, US, where a mother was led to believe that her daughter had been kidnapped. Using AI to clone the daughter’s voice, scammers pretending to be kidnappers demanded $1 million as ransom but finally settled for $50,000.

In India, media this month reported a case in which Radhakrishnan, a septuagenarian, was scammed via a deepfake WhatsApp video call. Believing the scammer to be a former colleague, Radhakrishnan transferred ₹40,000 to help with a supposed family medical emergency.

A study by cybersecurity firm McAfee shows that 47% of Indian adults have experienced or know someone who’s been a victim of an AI voice scam. A significant 83% of these victims suffered financial loss, with almost half losing more than ₹50,000.

Experts say voice-cloning technology has advanced to the point where a person’s tone and speaking style can be recreated from the smallest soundbites.

Some AI tools can create and spread false information. For example, AI models like generative pre-trained transformers (GPT) can churn out written texts, which could be used to create fake news articles or blog posts. These can sway public opinion and are often spread through websites, social media, or emails.

Some AI-driven bots on social media can push particular narratives or spread false information. These bots can automatically generate and post content, engage with users, and manipulate discussions to influence public opinion. Recommendation algorithms, on the other hand, can inadvertently promote false information by suggesting engaging but misleading content.

Democracy at risk

AI has the potential to bolster democracy, if it doesn’t undermine it first, say analysts.

Ritumbra Manuvie, a law lecturer and senior researcher at the University of Groningen in the Netherlands, expresses concern over AI’s impact on information sharing, especially the use of deepfakes and large language models (LLMs).

“There is a growing concern about how AI, specifically deepfake technologies and LLMs, will impact information architecture,” Manuvie says, adding that the primary worry lies in the mass production of propaganda “and our limited ability to discern the truth.”

According to a study by Deeptrace, an Amsterdam-based cybersecurity company, the number of deepfake videos climbed from 15,000 in December 2018 to over one million by February 2020. The majority of deepfake videos are created for pornographic purposes, the report said, adding that 96% of all the deepfake videos it found were non-consensual pornography featuring women.

AI tools are also being used on a much bigger scale, in espionage and international propaganda. Manuvie gives the example of Team Jorge, an Israel-based global disinformation unit, which allegedly meddled in the elections of various countries. An investigation by an international consortium of reporters earlier this year exposed the dangers of such tools in the hands of powerful companies that use disinformation as a weapon.

“Similarly, several people have expressed concerns about the use of large language models in spreading pro-Vladimir Putin disinformation in Europe,” says Manuvie.

AI has the potential to play an increasingly significant role in political communication and campaigns, as it offers capabilities for data analysis, targeted messaging, and automation. It provides an opportunity to analyze extensive datasets to understand voter behavior, shape messaging, and target specific voter segments.

Generative AI tools can be cleverly deployed in elections, whether to spread disinformation against an opponent or to push propaganda. The use of deepfake content in political campaigns was recently condemned by the American Association of Political Consultants as a violation of its ethics code.

Dr. Justin E. Lane, founder of social media analytics firm CulturePulse and an AI expert, expresses his fear about political misinformation spread by parties who also control education systems. “These parties bear the responsibility to educate the public about facts and truth so that they are less susceptible to disinformation,” Lane says.

AI developers are aware of the challenges, and some are already working on them. Sam Altman, CEO of OpenAI, the company that created the popular AI chatbot ChatGPT, spoke before the US Congress in May about the impending problems the development of AI presents.

Altman highlighted his concern about AI being used for disinformation, particularly in influencing elections.

Experts suggest that AI companies should prioritize designing products that uphold human rights principles. Despite the struggle to regulate AI globally, companies must focus on long-term stability and social responsibility.

To combat AI-led disinformation, Jameela suggests three steps: establishing transparency and accountability, developing and enforcing ethical guidelines, and implementing fact-checking and data verification systems.

Midjourney and OpenAI did not respond to emails requesting their comments on the reported misuse of their respective tools.